Software as a Disease

Posted on November 7, 2016 in life

The 2016 edition of the Vendée Globe race started last Sunday. 29 sailors on 60ft machines, alone around the world for more than 60 days. Their chance of making it home safe depends on the level of preparation of their boats, the excellence of their design, the hours invested by whole teams in every tiny detail, and the repetition of tests. This is real life: the list of those who "did not finish" is long and includes a few sad "lost at sea" entries.

Meanwhile, an online edition of the race started on the Virtual Regatta platform, and the start did not go as smoothly as the real-life one. As posted in the news section of the site:

"We just had a difficult start.

Dear sailors,

Mysql sessions were not properly closed by Amazon Web Services, which did an overloading of connections to the databases.

Our team did everything possible to overcome the damage. But first of all, we would like to thank you all for your understanding and for the kind support messages you sent us during these long hours."

Vendée starts have practically always been difficult on Virtual Regatta (not to mention other oddities...), due to technical issues. It is a situation that the team has traditionally managed rather poorly, to the point that they had to close their forums for a while in the past, when users' anger got out of control.

But this post is not about VR.

Read the Comments

What is fascinating are the comments on the news post. Putting aside the bunch of unhappy people who could not connect, could not start, or are encountering weird issues with the site, we find these:

"[They] are mostly victims of their own success and of computer issues they are not responsible for."

"More than 250k ppl at the same time on the same site [...] asks for some concessions."

"Bugs are a consequence of success."

"Sorry for bugs but they did not do it on purpose."

"They do the best they can, technical issues can occur even if everything is ready."

Is it not utterly scary to see that, for some people, bugs are an inherent part of software?

Building a Myth

I studied theoretical computer science. I remember learning how to prove, in the mathematical sense of the term, that an algorithm was correct. Marveling at the millions (at that time) of transistors in a CPU that would just work. Reading about Donald Knuth's reward checks.

So, somehow, when a platform consistently fails to perform... I tend to think that someone is to blame. Not in the personal sense: it is more about acknowledging the failure, the fact that things should not fail, and that they need to be fixed.

And yet the greatest achievement of the software industry seems to have been to convince people that what we do is a few orders of magnitude more complex than rocket science, and is bound to fail. Add a few magic words (hey, "MySql sessions were not properly closed!") and people will truly admire you for your efforts at mastering the beast.

It's a story that has been repeated so many times that people have learned to accept problems from computers that they would absolutely not tolerate from anything else.

Should Everyone Code?

I initially found the "everyone should learn to code" initiatives a bit... strange. Should everyone learn to play the piano, fly a helicopter or milk a cow? However, I am now wondering. Should everyone learn to code, just for the sake of understanding how stupid and deterministic it can be? How, if done right, it should just work and how, in any case, bug after bug is a failure that demands humility? Just to be able to refuse the lame excuses and not accept bugs as an inevitable part of the system?

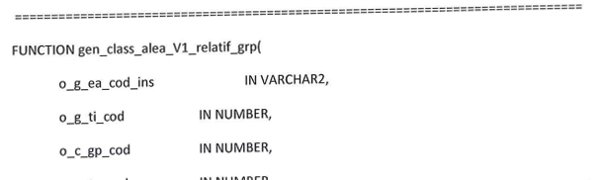

So that they could have a good laugh when the French government shamelessly releases this gem...

...as the source code of the system in charge of orienting students to the right school?

Or Give Up To AI?

That would be like asking everybody to learn how to carve wood, so they can understand how boring modern furniture is. However interesting I think coding is, I still am not sure everyone should code. So where do we go from here? I see two directions.

- Some software leaders provide enough quality (when was the last time the Amazon website crashed on you?) that people at last take that quality for granted and demand it from all services. Computers remain tools, and people demand tools that work, the way they expect their car to start every morning. They don't buy into lame excuses anymore and vote with their feet. Bad software dies.

Or,

- Software complexity keeps growing, especially with the adoption of "learning algorithms" and neural nets and other processes that just cannot explain how they reach a conclusion. It becomes harder and harder to justify the outputs from the inputs. We learn to accept that "things happen", autonomous cars sometimes crash, and our phone has "a disease".

Combining this with the Eliza experiment, can we conclude that true AI will be achieved the day we accept our computer is sick, and feel sorry for it?

Going even further: in these days where people try to fill their inner void, and deal with their primal fears of not existing enough or not being needed, by contemplating themselves existing in Facebook's mirror... how long before someone realizes that they would love their computer better if the computer exposed more human weaknesses?

What do you think?

Update: the VR fiasco has been picked up by a few French media outlets (e.g. Ouest France or L'Express). It looks like their forums went down again this morning.
